Why AI Can't Tell You How Your Developers Are Really Doing

AI can reason brilliantly over your engineering data, but it can only work with what it's given — and commit logs, tickets, and PR histories were never designed to capture what developers actually do between picking up a task and pushing their first commit. That behavioral layer, collected at the IDE level, is the missing data that separates a guess from a real answer, and it's exactly what CodeTogether captures. As AI tools reshape how software gets written, the teams that pull ahead will be the ones who can actually measure what's working.

The question makes sense on its surface: "Can't I just ask an AI?"

Connect Claude or Gemini to your repos, your project management tool, your sprint data, and ask for a read on your engineering team. Why pay for a dedicated analytics product when AI can apparently answer anything?

Here's the problem. AI can only reason from the data it has. And the data most engineering teams are feeding it was never designed to capture what developers actually do between picking up a task and pushing their first commit. The reasoning is fine. The data is the issue.

The map problem

Think about the last time you went for a walk. You know you walked two miles because you've done that loop a hundred times. That's all you know. You got from start to finish. Done.

Now imagine someone asks you: "How did your walk go?"

You could tell them a lot. That big hill in the middle that always burns your legs. The point where you needed water. How your pace slowed in the last quarter mile. There's a whole story in that walk — but none of it appears on the map. The map just shows a two-mile loop.

This is exactly the situation any AI analysis of your engineering team is in. It has the map. It doesn't have the walk.

Engineering metrics: what AI can see vs. what actually matters

When you ask an AI to analyze your development team, it works with what it can access: pull request rates, lines of code accepted, ticket velocity, deployment frequency. These are real developer performance metrics. They matter. But they're the map.

What happens in between — the actual work of software development — is invisible.

Consider a simple example. A developer receives a task. The requirements are vague. They spend two days exploring the codebase, writing code in the wrong direction, realizing the mistake, starting over. Eventually they deliver something solid.

From every metric your AI can see, this looks like a perfectly normal sprint. Task assigned, task completed, time elapsed: reasonable.

The AI has no idea that half that time was wasted because of a poorly written ticket. It can't see the false start or the rewrite. It can't flag that your task clarity process needs work, because all it sees is that the task got done.

This isn't a failure of AI reasoning. It's a data problem.
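To make the gap concrete, here is a minimal Python sketch of the example above. Everything in it is an illustrative assumption: the `Task` and `EditorActivity` types, their field names, and the numbers are hypothetical, and none of it represents a real tracker schema or CodeTogether's data model. It only shows that two very different weeks can collapse into identical tracker-level metrics.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Task:
    """What a tracker and repo typically record (hypothetical fields)."""
    assigned: datetime
    merged: datetime
    commits: int


@dataclass
class EditorActivity:
    """Hypothetical pre-commit signals; not a real product schema."""
    hours_exploring: float
    discarded_attempts: int


def map_metrics(task: Task) -> dict:
    """Summarize a task using only tracker and repo data (the 'map')."""
    return {
        "cycle_time_days": (task.merged - task.assigned).days,
        "commits": task.commits,
    }


start = datetime(2024, 1, 8, 9, 0)

# Two tasks that look identical to anything reading the tracker.
clear_ticket = Task(start, start + timedelta(days=4), commits=3)
vague_ticket = Task(start, start + timedelta(days=4), commits=3)

# The 'walk' behind each task (made-up numbers for illustration).
clear_walk = EditorActivity(hours_exploring=2.0, discarded_attempts=0)
vague_walk = EditorActivity(hours_exploring=16.0, discarded_attempts=1)

same_map = map_metrics(clear_ticket) == map_metrics(vague_ticket)
same_walk = clear_walk == vague_walk

print("Same map:", same_map)
print("Same walk:", same_walk)
```

An AI handed only the output of `map_metrics` has nothing to distinguish the two tasks. The difference lives entirely in the second record, which no commit log or ticket contains.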

The Fitbit didn't exist yesterday

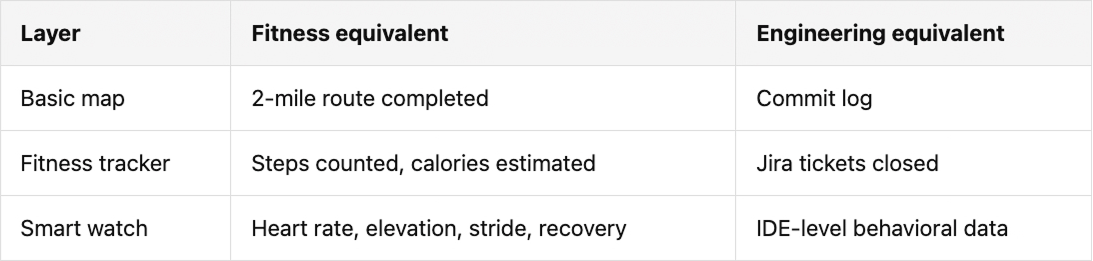

Let's extend the walking analogy. For most of human history, if you wanted to understand your fitness, you had the map: total distance, maybe total time. Then fitness trackers arrived.

Suddenly you could see steps. Then heart rate. Then elevation gain, stride length, sleep quality, recovery time, caloric burn correlated with food intake. Each new layer of data unlocked a deeper layer of insight — and a more specific, actionable answer to "how am I doing, and what should I change?"

The Apple Watch doesn't just tell you that you walked. It tells you you're starting your runs too fast based on your heart rate data. It tells you your performance drops when you sleep under seven hours. It surfaces things you didn't know to ask about yourself, because it has data you didn't know you were missing.

Here's how that maps to engineering analytics. Some engineers reach flow state in 30 minutes; others take 4 hours. Task clarity, meeting load, and context switching all play a role. The strongest runners hold a controlled pace early, while the ones who burn out go too fast, too early. The same patterns exist in your engineering team.

Imagine being able to see all of that — not just whether a developer delivered, but how they delivered, and not just for one person, but across your whole team, so you can identify what working practices actually drive better outcomes, and which ones quietly drain efficiency.

That's the difference between an AI working from peripheral engineering metrics and one with access to real developer productivity analytics built on behavioral data collected at the source.

Why AI-driven developer performance analysis falls short

Here's the direct answer to the question engineering leaders are asking:

AI tools like Claude or Gemini are extraordinary reasoning engines. Given good data, they produce good insights. But no matter how deeply integrated you make them with your existing systems, they're still reasoning from the metrics those systems were designed to capture: commit logs, issue trackers, code review histories.

None of those systems were designed to capture what a developer actually does between picking up a task and pushing their first commit. That behavioral layer — the layer that contains the why behind every outcome — doesn't exist in any platform AI can crawl.

So when you ask an AI why your PR acceptance rate is low, it can look at your PRs and make some inferences. It might notice patterns in review comments, or flag that certain developers' submissions consistently need multiple rounds. But it can't tell you that the root cause is upstream: that the tickets those developers are receiving aren't specific enough to act on. It doesn't know that because nobody captured it.

The same blind spot applies to AI tooling itself. Some engineers get more output and cleaner code from AI assistants; others copy-paste without context. You can't tell the difference without the right underlying data.

Without the right data, even the best AI is guessing at causes from effects.

The measurement problem just got urgent

The data gap in software development isn't new. Engineers have always done their most important work in ways that were never measured: the thinking, the experimenting, the collaborating, the recovering from wrong turns.

But the stakes just got significantly higher.

As AI tools become central to how software gets written, the invisible layer matters more, not less. Which engineers are genuinely accelerating with AI, and which are struggling quietly? Is AI tooling helping your junior developers grow, or creating a dependency that masks gaps in their understanding? How do you make the case for, or against, a significant AI tooling investment when you can't measure what's actually happening in the IDE?

These are the questions engineering leaders are being asked to answer right now. And you can't answer them with the engineering metrics you have today. The data doesn't exist — unless you're collecting it.

Measuring the walk, not just the map

Every sprint ends the same way: with a number. Story points completed vs. planned. But that number never tells you what got in the way.

A customer emergency derailed the sprint. Requirements weren't clear. A meeting broke a flow session. These structural problems impact delivery but never show up in Jira, GitHub, or your standup notes. All you see at the end is: story points short.

The engineering teams that pull ahead in the next few years won't just be the ones with the best AI tools. They'll be the ones who can actually measure what's working.

That requires developer productivity analytics built on data collected where the work actually happens: in the development environment, at the level of developer behavior, before the commit. Data that captures not just what got built, but how — and at what cost.

With that foundation, AI analysis stops being a guess and starts being genuinely useful. You can ask meaningful questions and get meaningful answers. You can identify what's driving developer effectiveness and what's undermining it. You can build an engineering organization that improves deliberately, not just ships consistently.

CodeTogether captures the behavioral data that standard development tools miss: the layer of developer activity that happens before the commit. The goal is not to assign blame, but to give you the data to actually change something. If you're trying to make the case for or against your AI tooling investment, the data your current stack is missing is exactly what we collect.

Bring CodeTogether to your team

CodeTogether integrates directly into your existing process so there's no need to change the way you work.
